
SMILA/Documentation/CrawlerController


Overview

The CrawlerController is a component that manages and monitors Crawlers. Whenever a new crawl is triggered (via startCrawl()), a new instance of the requested Crawler is created, and the hash value of the crawler object is used as an ID (called the import run ID) to identify the records created by this crawler instance. The import run ID is set as the attribute _importRunId on all records and is also visible on the crawler instance in the JMX console.
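As an illustration of the tagging described above, here is a minimal sketch. The class `ImportRunIdSketch`, its method names, and the use of a plain `Map` as a stand-in for a record are assumptions made for this example; they are not part of the SMILA API. The ID is rendered as a string here, which is also an assumption.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical helper, not part of SMILA: derives and applies an import run ID. */
final class ImportRunIdSketch {
  /** Derives the import run ID from the hash value of the crawler instance. */
  static String importRunId(Object crawlerInstance) {
    return String.valueOf(crawlerInstance.hashCode());
  }

  /** Returns a copy of the record attributes with _importRunId set. */
  static Map<String, Object> tag(Map<String, Object> recordAttributes, String importRunId) {
    Map<String, Object> tagged = new HashMap<>(recordAttributes);
    tagged.put("_importRunId", importRunId);
    return tagged;
  }
}
```

All records produced during one crawl run would carry the same value, so they can later be traced back to the crawler instance that created them.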

API

Current javadoc:

- org.eclipse.smila.connectivity.framework.CrawlerController

- org.eclipse.smila.connectivity.framework.util.CrawlerControllerCallback

Implementations

It is possible to provide different implementations for the CrawlerController interface. At the moment there is one implementation available.

org.eclipse.smila.connectivity.framework.impl

This bundle contains the default implementation of the CrawlerController interface.

The CrawlerController implements the general processing logic common to all types of Crawlers. Its interface is a pure management interface that can be accessed either through its Java interface or through the JMX interface that wraps it. It has references to the following OSGi services:

- Crawler ComponentFactory

- ConnectivityManager

- DeltaIndexingManager (optional)

- CompoundManager

- ConfigurationManagement (t.b.d.)

Crawler factories register themselves with the CrawlerController. Each time a crawl for a certain type of crawler is initiated, a new instance of that Crawler type is created via the Crawler ComponentFactory. This allows parallel crawling of data sources of the same type (e.g. several websites). Note that the same data source cannot be crawled concurrently.
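The rule that one data source cannot be crawled twice at the same time, while different data sources of the same type can, could be enforced with a small registry keyed by data source ID. The sketch below is only an illustration of that idea; `ActiveCrawlRegistry` and its methods are hypothetical names and do not reflect how the default implementation actually does it.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical registry of data sources that are currently being crawled. */
final class ActiveCrawlRegistry {
  // Thread-safe set; add() is atomic, so two concurrent starts cannot both succeed.
  private final Set<String> active = ConcurrentHashMap.newKeySet();

  /** Returns true if the crawl may start, false if this data source is already being crawled. */
  boolean tryStart(String dataSourceId) {
    return active.add(dataSourceId);
  }

  /** Marks the crawl of the given data source as ended (finished, stopped or aborted). */
  void finished(String dataSourceId) {
    active.remove(dataSourceId);
  }
}
```

A caller would invoke `tryStart` before creating the new Crawler instance and `finished` once the run has ended, whatever its outcome.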

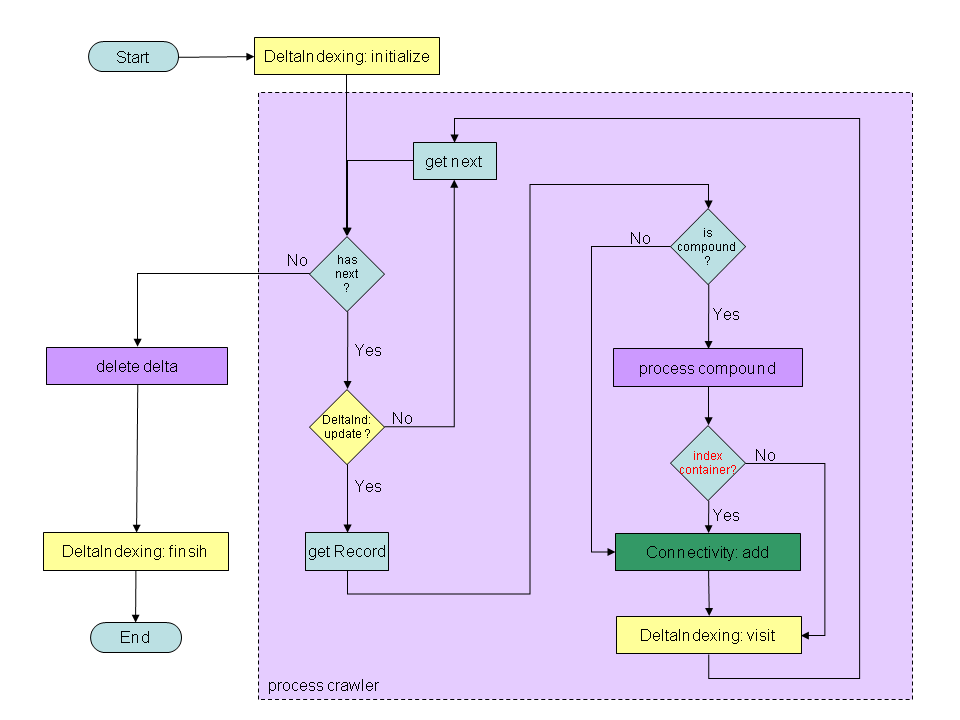

This chart shows the current CrawlerController processing logic for one crawl run:

- First, the CrawlerController initializes DeltaIndexing for the current data source by calling DeltaIndexingManager::init(String) and also initializes a new Crawler (not shown)

- It then executes the subprocess Process Crawler with the initialized Crawler

- If no error has occurred so far, it performs the subprocess Delete Delta

- Finally, it finishes the run by calling DeltaIndexingManager::finish(String)

- Process Crawler

  - the CrawlerController checks if the given Crawler has more data available

    - YES: for each DataReference sent by the Crawler, the CrawlerController checks whether it needs to be updated by calling DeltaIndexingManager::checkForUpdate(...)

      - YES: the CrawlerController requests the complete record from the Crawler and checks if the record is a compound

        - YES: the subprocess Process Compounds is executed

        - NO: no special actions are taken

        - the record is added to the Queue by calling ConnectivityManager::add(...) and is marked as visited in the DeltaIndexingManager by calling DeltaIndexingManager::visit(...)

      - NO: the DataReference is skipped. The DeltaIndexingManager has already set the visited flag for this Id internally

    - NO: return to the calling process

- Process Compounds

  Please see CompoundManagement for details on compound handling.

  - by calling CompoundManager::extract(Record, DataSourceConnectionConfig) the subprocess receives a CompoundCrawler that iterates over the elements of the compound record

  - the subprocess recursively calls the subprocess Process Crawler using the CompoundCrawler

  - the compound record is adapted according to the configuration (set to null, modified, left unmodified) by calling CompoundManager::adaptCompoundRecord(Record, DataSourceConnectionConfig)

  - return to the calling process

- Delete Delta

  - by calling DeltaIndexingManager::obsoleteIdIterator(...) the subprocess receives an Iterator over all Ids that have to be deleted

  - for each Id ConnectivityManager::delete(...) is called

  - return to the calling process

- Note

  - The exact logic depends on the DeltaIndexing setting in the data source configuration. Depending on the configured value, the delta indexing logic is executed fully, partially or not at all.
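The processing logic above can be sketched in a few lines of Java. This is a simplified illustration under stated assumptions, not the actual implementation: `DeltaIndex` and `RecordSink` are hypothetical stand-ins for DeltaIndexingManager and ConnectivityManager with reduced signatures, the crawler is modelled as a plain `Iterator`, compound handling is omitted, and calling `finish` even after an error is an assumption of this sketch.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical, simplified stand-in for DeltaIndexingManager (not the real interface). */
interface DeltaIndex {
  void init(String dataSourceId);
  boolean checkForUpdate(String id, String hash);
  void visit(String id, String hash);
  Iterator<String> obsoleteIdIterator(String dataSourceId);
  void finish(String dataSourceId);
}

/** Hypothetical stand-in for the add/delete part of ConnectivityManager. */
interface RecordSink {
  void add(String id);
  void delete(String id);
}

/** A data reference as sent by a crawler: record id plus a hash of its content. */
final class DataRef {
  final String id;
  final String hash;
  DataRef(String id, String hash) { this.id = id; this.hash = hash; }
}

/** In-memory delta index for a single data source, for illustration only. */
final class InMemoryDeltaIndex implements DeltaIndex {
  private final Map<String, String> hashes = new LinkedHashMap<>();
  private final Set<String> visited = new HashSet<>();

  public void init(String dataSourceId) { visited.clear(); }

  public boolean checkForUpdate(String id, String hash) {
    if (hash.equals(hashes.get(id))) {
      visited.add(id); // unchanged: the visited flag is set internally
      return false;
    }
    return true;
  }

  public void visit(String id, String hash) { hashes.put(id, hash); visited.add(id); }

  public Iterator<String> obsoleteIdIterator(String dataSourceId) {
    List<String> obsolete = new ArrayList<>(hashes.keySet());
    obsolete.removeAll(visited);
    hashes.keySet().removeAll(obsolete); // simplification: forget obsolete ids immediately
    return obsolete.iterator();
  }

  public void finish(String dataSourceId) { visited.clear(); }
}

/** Sink that just remembers what was added and deleted. */
final class ListSink implements RecordSink {
  final List<String> added = new ArrayList<>();
  final List<String> deleted = new ArrayList<>();
  public void add(String id) { added.add(id); }
  public void delete(String id) { deleted.add(id); }
}

final class CrawlRunSketch {
  /** One crawl run: init, Process Crawler, Delete Delta, finish. */
  static void run(String dataSourceId, Iterator<DataRef> crawler, DeltaIndex delta, RecordSink sink) {
    delta.init(dataSourceId);
    try {
      while (crawler.hasNext()) {                       // Process Crawler
        DataRef ref = crawler.next();
        if (delta.checkForUpdate(ref.id, ref.hash)) {   // new or changed
          sink.add(ref.id);
          delta.visit(ref.id, ref.hash);
        }                                               // otherwise skipped
      }
      Iterator<String> obsolete = delta.obsoleteIdIterator(dataSourceId);
      while (obsolete.hasNext()) {                      // Delete Delta, skipped on error
        sink.delete(obsolete.next());
      }
    } finally {
      delta.finish(dataSourceId);                       // assumption: always called
    }
  }
}
```

Running the sketch twice against the same `InMemoryDeltaIndex` shows the delta behaviour: unchanged references are skipped on the second run, and IDs that were not visited again are passed to `delete`.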

Configuration

There are no configuration options available for this bundle.

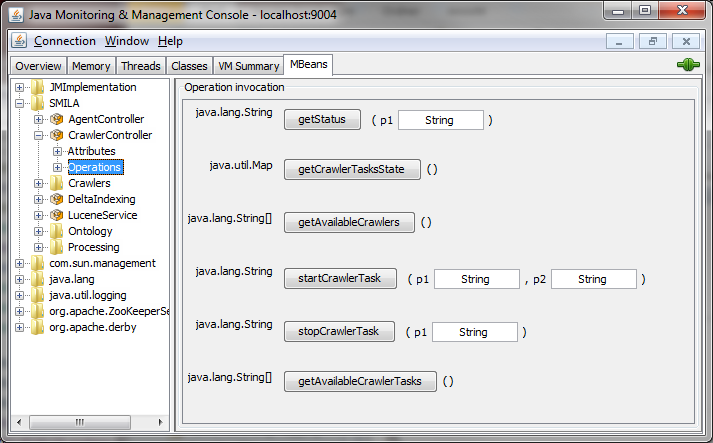

JMX interface

Javdoc: org.eclipse.smila.connectivity.framework.CrawlerControllerAgent

Here is a screenshot of the CrawlerController in the JMX Console:

HTTP ReST JSON interface

Since version 0.9 the CrawlerController can also be controlled via the SMILA ReST API. It provides the following endpoints:

| endpoint | method | description |
|---|---|---|
| /smila/crawlers | GET | list data sources available for crawling and the current crawl state |
| /smila/crawlers/<datasource-id> | GET | get statistics of current or last crawl run, if one exists |
| /smila/crawlers/<datasource-id> | POST + JSON body | start crawler |
| /smila/crawlers/<datasource-id>/finish | POST | stop crawler |

Crawler Datasource Listing

The listing contains the available data sources that can be used for crawling and the current crawl state of each. The state "Undefined" means that no crawl run has been started for the datasource yet. The other possible states are:

- Running: A crawler is currently working on this datasource.

- Finished: The crawler has crawled the datasource completely.

- Stopped: The crawler was stopped by the user before it finished crawling the datasource.

- Aborted: A fatal error occurred while crawling the datasource.

If the state has one of these four values, it is possible to read statistics for the datasource by using the given URL. Example:

    GET /smila/crawlers/
    --> 200 OK
    {
      "crawlers": [
        { "name": "web", "state": "Undefined", "url": "http://localhost:8080/smila/crawlers/web/" },
        { "name": "file", "state": "Finished", "url": "http://localhost:8080/smila/crawlers/file/" },
        { "name": "xmldump", "state": "Undefined", "url": "http://localhost:8080/smila/crawlers/xmldump/" }
      ]
    }

Start a Crawler

If a datasource is not in crawl state "Running" it can be started using the URL given in the datasource listing. The request must contain a JSON body describing the destination job to submit records to. In case of success the response contains the internal import run ID.

    POST /smila/crawlers/file/
    { "jobName": "indexUpdateJob" }
    --> 200 OK
    { "importRunId": 1992135396 }

Other response codes:

- 400 Bad Request: datasource ID does not exist, destination job is not active, datasource is not a crawler source or a crawler is already running for the datasource.

- 500 Internal Server Error: Other errors.
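A client could issue the start request with the standard `java.net.HttpURLConnection` class, roughly as sketched below. The class `StartCrawlClient`, its method names, and the `Content-Type: application/json` header are assumptions of this example; the page itself only specifies the URL, the HTTP method, and the JSON body.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;

/** Hypothetical client helper for starting a crawler via the ReST API. */
final class StartCrawlClient {
  /** Builds the JSON request body; only quotes and backslashes in the job name are escaped. */
  static String body(String jobName) {
    String escaped = jobName.replace("\\", "\\\\").replace("\"", "\\\"");
    return "{\"jobName\": \"" + escaped + "\"}";
  }

  /**
   * Sends POST <baseUrl>/smila/crawlers/<dataSourceId>/ and returns the HTTP status code:
   * 200 on success, 400 or 500 as listed above.
   */
  static int start(String baseUrl, String dataSourceId, String jobName) throws IOException {
    URL url = new URL(baseUrl + "/smila/crawlers/" + dataSourceId + "/");
    HttpURLConnection con = (HttpURLConnection) url.openConnection();
    con.setRequestMethod("POST");
    con.setRequestProperty("Content-Type", "application/json"); // assumed header
    con.setDoOutput(true);
    try (OutputStream out = con.getOutputStream()) {
      out.write(body(jobName).getBytes(StandardCharsets.UTF_8));
    }
    return con.getResponseCode();
  }
}
```

For the example above, the call would be `StartCrawlClient.start("http://localhost:8080", "file", "indexUpdateJob")`. Reading the `importRunId` from the response body is left out to keep the sketch free of a JSON library.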

Get Crawler Statistics

If a datasource has been crawled or is currently being crawled, you can read the performance counters using the datasource URL:

    GET /smila/crawlers/file/
    --> 200 OK
    {
      "jobName": "job",
      "attachmentBytesTransfered": 0,
      "attachmentTransferRate": 0,
      "averageAttachmentTransferRate": 0,
      "averageDeltaIndicesProcessingTime": 0,
      "averageRecordsProcessingTime": 0,
      "deltaIndices": 569,
      "endDate": "2011-09-06",
      "errorBuffer": "[]",
      "exceptions": 0,
      "exceptionsCritical": 0,
      "importRunId": "786625416",
      "overallAverageDeltaIndicesProcessingTime": 10.06854130052724,
      "overallAverageRecordsProcessingTime": "Infinity",
      "records": 0,
      "startDate": "2011-09-06",
      "files": 0,
      "folders": 0,
      "producerExceptions": 0,
      "dataSourceId": "file",
      "state": "Finished"
    }

Other response codes:

- 400 Bad Request: Invalid datasource ID.

- 404 Not Found: No statistics available for the given datasource.

- 500 Internal Server Error: Other errors.

Stop a Crawler

To stop a running crawler, use the following HTTP request. The response body is empty; only the status code 200 OK is returned.

POST /smila/crawlers/file/finish/ --> 200 OK

Other response codes:

- 400 Bad Request: No crawler is running for this datasource.

- 500 Internal Server Error: Other errors.
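The state rules described in the sections above (statistics exist for every state except "Undefined", a crawl can be started unless it is "Running", and only a "Running" crawl can be stopped) can be summarized in a small client-side helper. The enum `CrawlState` and its methods are hypothetical names for this sketch and are not part of the SMILA API.

```java
import java.util.Locale;

/** Hypothetical client-side model of the crawl states returned by the ReST API. */
enum CrawlState {
  UNDEFINED, RUNNING, FINISHED, STOPPED, ABORTED;

  /** Statistics can be read once a crawl run has been started at least once. */
  boolean hasStatistics() { return this != UNDEFINED; }

  /** A crawl can be started unless one is already running (otherwise 400 Bad Request). */
  boolean canStart() { return this != RUNNING; }

  /** Only a running crawl can be stopped (otherwise 400 Bad Request). */
  boolean canStop() { return this == RUNNING; }

  /** Parses the "state" value from the listing, e.g. "Finished". */
  static CrawlState fromJson(String state) {
    return valueOf(state.toUpperCase(Locale.ROOT));
  }
}
```

A client could use these checks to avoid requests that the server would reject, for example by calling `CrawlState.fromJson("Finished").canStart()` before issuing the POST.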
